Edge AI GPU Computing Architecture: High-Performance Expansion vs. Rugged Embedded Solutions

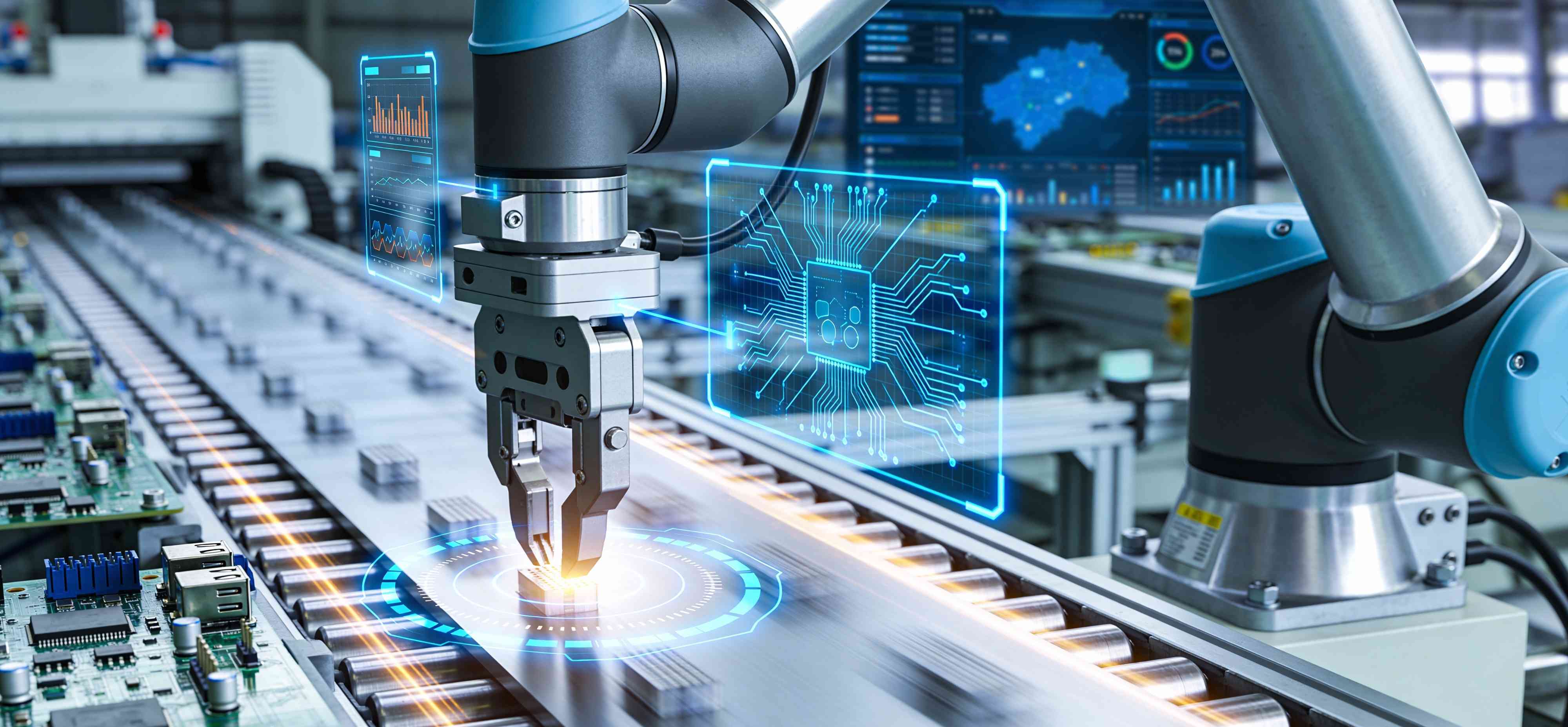

Selecting the right Edge AI GPU computing architecture is one of the most consequential decisions for a deployment at the rugged edge. Whether the workload demands the scalable raw power of a PCIe-based expansion system or the compact resilience of an embedded module, the choice directly affects long-term operational stability. In the rapidly evolving field of autonomous robotics hardware, the platform must be matched to the specific environmental and computational requirements of each application, from smart transit to complex defense systems.

Expansion vs. Embedded Edge AI: Strategic Optimization for Your Mission

The choice between expansion and embedded Edge AI architectures hinges on the trade-off between absolute computational throughput and power-efficient resilience. A rugged embedded system balances heat dissipation and processing speed within a strict power budget, offering an integrated, low-profile footprint suited to battery-operated mobile platforms. Conversely, expansion-based architectures provide a modular upgrade path for specialized accelerators, enabling high-bandwidth inference where neural network complexity calls for desktop-grade GPU performance. Understanding these trade-offs helps operators match the platform to the power conditions and mechanical stress of the deployment, so that edge intelligence stays resilient despite electrical fluctuations or extreme vibration and shock.
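The throughput-versus-power trade-off can be sketched as a simple selection rule. The Python snippet below is a minimal illustration, not a sizing tool. The `Platform` profiles, the `pick_platform` helper, and every wattage and TOPS figure in it are hypothetical placeholders to be replaced with datasheet values.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Platform:
    name: str
    peak_tops: float    # peak AI throughput in TOPS
    max_power_w: float  # worst-case power draw in watts

    @property
    def tops_per_watt(self) -> float:
        return self.peak_tops / self.max_power_w


# Hypothetical profiles for illustration only; substitute datasheet values.
EMBEDDED = Platform("embedded module", peak_tops=275.0, max_power_w=60.0)
EXPANSION = Platform("PCIe expansion", peak_tops=500.0, max_power_w=350.0)


def pick_platform(required_tops: float, power_budget_w: float) -> Optional[Platform]:
    """Return the most power-efficient platform that meets both constraints."""
    candidates = [
        p for p in (EMBEDDED, EXPANSION)
        if p.peak_tops >= required_tops and p.max_power_w <= power_budget_w
    ]
    if not candidates:
        return None  # nothing satisfies both the throughput and power limits
    return max(candidates, key=lambda p: p.tops_per_watt)


if __name__ == "__main__":
    for tops, watts in [(100, 80), (400, 500), (400, 80)]:
        choice = pick_platform(tops, watts)
        print(tops, watts, choice.name if choice else "no fit")
```

When both platforms qualify, the rule prefers the higher TOPS-per-watt option, which reflects the priority of battery-operated deployments. A site with mains power and large models might reasonably rank by peak throughput instead.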

High-Performance AI Inference: The Power of ABOX PCIe Expansion

For applications where data processing demands are paramount and the power budget allows for high-wattage operation, the ABOX Series serves as a high-performance command center. Pairing an x86 CPU with PCIe GPU expansion, it is suited to high-performance AI inference tasks such as high-speed rail track inspection or real-time multi-stream 8K analytics. The system is engineered to support high-tier GPU modules, delivering hundreds of AI TOPS to handle data fusion from multiple high-resolution sensors simultaneously. Beyond raw speed, the integrated 10GbE and 2.5GbE PoE+ connectivity helps heavy-duty machinery, such as autonomous mining trucks or port cranes, ingest sensor data without network bottlenecks.

Autonomous Robotics Hardware: The Resilience of IBOX Embedded Systems

Conversely, the IBOX Series targets mobility-focused edge intelligence and is built primarily on the NVIDIA Jetson AGX Orin platform. As a cornerstone of modern autonomous robotics hardware, these systems offer a high performance-per-watt ratio, providing up to 275 TOPS of AI performance within a fanless, space-constrained chassis. The technical advantage of this embedded architecture lies in its native GMSL2 camera support, which delivers the low-latency video feeds needed for precise object recognition and driverless navigation. For deployments in hostile outdoor conditions, these units can be configured as an IP66 waterproof computer with M12 connectors, sealing the electronics against dust and powerful water jets while maintaining high-speed AI processing.

Field Application: Precision Engineering for Every AI Frontier

Choosing the right Edge AI GPU computing architecture depends on the specific stressors and energy constraints of the operational environment. In sectors like heavy-duty industrial automation and mobile command centers, where space is available but the AI models are large, the ABOX Expansion Series provides the computational headroom to process complex SLAM and surround-view algorithms without throttling.

In contrast, for last-mile delivery robots and smart farming machinery, the IBOX Embedded Series is the strategic fit. These applications prioritize battery life and physical endurance. The SoC (System on Chip) architecture places the CPU and GPU on a single module, which reduces data transfer latency between them, and the sealed chassis withstands the constant micro-vibrations and unpredictable weather typical of outdoor service. Choosing correctly between expansion and embedded Edge AI keeps edge deployments stable and secure, providing a reliable foundation for the next generation of autonomous technology.

Technical FAQ: Edge AI GPU Architecture & Implementation

Q1: What are the primary thermal considerations when utilizing PCIe GPU expansion in extreme environments?

A: High-performance GPUs generate significant heat and require a robust thermal management system. SINTRONES’ ABOX series uses a patented fanless design with high-efficiency heat pipes to dissipate heat from both the CPU and the PCIe GPU expansion card. This supports stable operation at ambient temperatures up to 70°C and helps prevent thermal throttling during intensive high-performance AI inference tasks.
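To see why ambient temperature matters so much, a first-order steady-state model is useful: junction temperature is roughly ambient temperature plus dissipated power times the thermal resistance of the cooling path. The sketch below applies that textbook relation. The thermal resistance and throttle limit are hypothetical placeholders, not SINTRONES or GPU vendor specifications.

```python
def junction_temp_c(ambient_c: float, power_w: float, theta_ja_c_per_w: float) -> float:
    """Steady-state junction temperature: T_j = T_ambient + P * theta_ja."""
    return ambient_c + power_w * theta_ja_c_per_w


def max_sustained_power_w(ambient_c: float, throttle_limit_c: float,
                          theta_ja_c_per_w: float) -> float:
    """Largest power draw that keeps the junction at or below the throttle limit."""
    if theta_ja_c_per_w <= 0:
        raise ValueError("thermal resistance must be positive")
    return max(0.0, (throttle_limit_c - ambient_c) / theta_ja_c_per_w)


if __name__ == "__main__":
    # Hypothetical values: 0.25 degC/W cooling path, 95 degC throttle limit.
    THETA_JA = 0.25
    THROTTLE_LIMIT_C = 95.0
    for ambient in (25.0, 50.0, 70.0):
        budget = max_sustained_power_w(ambient, THROTTLE_LIMIT_C, THETA_JA)
        print(f"ambient {ambient:.0f} degC -> sustained budget {budget:.0f} W")
```

Under these assumed numbers, the sustainable power budget shrinks sharply as ambient temperature rises, which is why the cooling path has to be sized for the hottest expected environment instead of typical conditions.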

Q2: How does GMSL2 camera support enhance the capabilities of autonomous robotics hardware?

A: GMSL2 camera support provides a high-bandwidth, serialized connection that allows low-latency video transmission over distances up to 15 meters. For autonomous robotics hardware, this is an advantage over standard USB or Ethernet cameras, because the NVIDIA Jetson AGX Orin receives raw image data with minimal delay for real-time obstacle detection and path planning.
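A quick bandwidth estimate shows why a multi-gigabit serial link suits raw camera data. The sketch below computes the uncompressed bitrate of a video stream and checks it against a link budget. The 6 Gbps forward-channel rate and the 80% usable fraction are assumptions for illustration and should be checked against the serializer datasheet. The function names are hypothetical.

```python
ASSUMED_LINK_GBPS = 6.0   # assumption: forward-channel rate of the serial link
USABLE_FRACTION = 0.8     # assumption: share left after protocol overhead


def raw_video_gbps(width: int, height: int, fps: float, bits_per_pixel: int) -> float:
    """Uncompressed video bitrate in gigabits per second."""
    return width * height * fps * bits_per_pixel / 1e9


def fits_link(stream_gbps: float,
              link_gbps: float = ASSUMED_LINK_GBPS,
              usable_fraction: float = USABLE_FRACTION) -> bool:
    """True if the stream fits within the usable portion of the link."""
    return stream_gbps <= link_gbps * usable_fraction


if __name__ == "__main__":
    cameras = {
        "1080p30, 12-bit raw": (1920, 1080, 30, 12),
        "4K30, 12-bit raw": (3840, 2160, 30, 12),
        "4K60, 12-bit raw": (3840, 2160, 60, 12),
    }
    for label, spec in cameras.items():
        rate = raw_video_gbps(*spec)
        print(f"{label}: {rate:.2f} Gbps, fits link: {fits_link(rate)}")
```

The estimate ignores blanking intervals and packet framing, so it is a lower bound on the real link load.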

Q3: Is an IP66 waterproof computer limited in its expansion or processing capabilities?

A: Although an IP66 waterproof computer like the IBOX series is fully sealed against dust and water jets, it does not sacrifice core processing power. By using the NVIDIA Jetson AGX Orin module, it achieves up to 275 TOPS of AI performance. M12 connectors keep high-speed I/O and GMSL2 feeds waterproof without compromising the signal integrity required of a rugged embedded system in outdoor environments.